@kushagharahi/pi-llama-extensions

Pi extensions for llama.cpp router — auto model discovery and tokens/second display

Package details

Install @kushagharahi/pi-llama-extensions from npm and Pi will load the resources declared by the package manifest.

$ pi install npm:@kushagharahi/pi-llama-extensions

- Package: @kushagharahi/pi-llama-extensions
- Version: 0.1.0
- Published: Apr 26, 2026
- Downloads: 161/mo · 161/wk
- Author: kushagharahi
- License: unknown
- Types: extension
- Size: 107.7 KB
- Dependencies: 0 dependencies · 2 peers

Pi manifest JSON

    {
      "extensions": [
        "./extensions"
      ],
      "image": "https://raw.githubusercontent.com/kushagharahi/pi-llama-extensions/refs/heads/main/screenshot.png"
    }

Security note

Pi packages can execute code and influence agent behavior. Review the source before installing third-party packages.

README

Pi extensions for llama.cpp power users

Features

- Configure models only in llama.cpp
  - Auto model discovery in router mode: you no longer have to duplicate what's in llama.cpp's models.ini in Pi's models.json
  - Takes the first model as the default on load

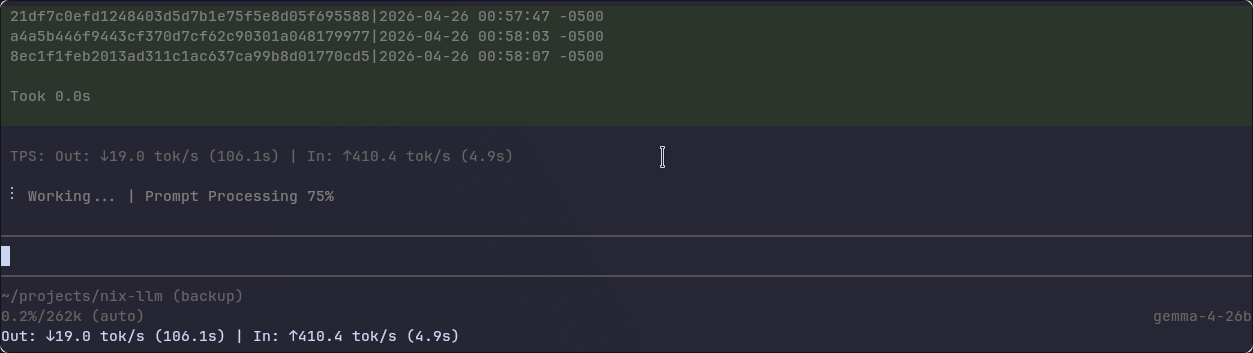

- Performance metrics in the Pi TUI
  - Tokens/second display
  - Prompt processing % display
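
To make the two metrics concrete, here is a minimal sketch of the arithmetic behind a tokens/second figure and a prompt-processing percentage. It is not the extension's actual code: the function names and inputs (token counts, elapsed milliseconds) are hypothetical and chosen only for illustration.

```typescript
// Hypothetical helper: generation speed from a token count and elapsed time.
// Returns 0 when no time has elapsed, to avoid dividing by zero.
function tokensPerSecond(tokens: number, elapsedMs: number): number {
  if (elapsedMs <= 0) return 0;
  return tokens / (elapsedMs / 1000);
}

// Hypothetical helper: prompt-processing progress as a whole-number
// percentage, clamped to the range 0..100.
function promptProgressPercent(processed: number, total: number): number {
  if (total <= 0) return 0;
  const pct = Math.round((processed / total) * 100);
  return Math.min(100, Math.max(0, pct));
}
```

For example, 50 tokens generated in 2000 ms works out to 25 tokens/second, and 256 of 1024 prompt tokens processed is 25%.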

Quick start

pi install npm:@kushagharahi/pi-llama-extensions

models.json config for auto model discovery in router mode

Auto model discovery from llama.cpp's router mode needs the following config. Two details matter: the provider key must be llama-cpp, and its models array must contain one entry with "id": "llama-cpp-discover".

models.json:

    {
      "providers": {
        "llama-cpp": {
          "baseUrl": "http://127.0.0.1:8080",
          "api": "openai-completions",
          "apiKey": "local",
          "models": [
            { "id": "llama-cpp-discover" }
          ]
        }
      }
    }

The extension then fills in the fields of each discovered model, such as id, name, contextLength, and maxTokens.
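
As a rough illustration of the idea, the sketch below shows how a placeholder entry could be swapped for discovered models. It is not the extension's implementation. It assumes the server returns an OpenAI-style model listing (an object with a data array of entries that each have an id), and the names ModelList, ModelEntry, and expandPlaceholder are hypothetical. Fields like contextLength and maxTokens are left out because where they come from is not described here.

```typescript
// Assumed shape of an OpenAI-style model listing returned by the server.
interface ModelList {
  data: { id: string }[];
}

// Assumed minimal shape of a model entry in models.json.
interface ModelEntry {
  id: string;
  name?: string;
}

// Placeholder id taken from the models.json config above.
const PLACEHOLDER_ID = "llama-cpp-discover";

// Replace the placeholder entry with one entry per discovered model.
// Any entry that is not the placeholder is kept unchanged.
function expandPlaceholder(models: ModelEntry[], listing: ModelList): ModelEntry[] {
  const result: ModelEntry[] = [];
  for (const entry of models) {
    if (entry.id === PLACEHOLDER_ID) {
      for (const found of listing.data) {
        // Using the id as the display name is an illustrative choice.
        result.push({ id: found.id, name: found.id });
      }
    } else {
      result.push(entry);
    }
  }
  return result;
}
```

With this approach, the first element of the returned array would be the natural candidate for the default model on load.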

Debug

Set LLAMA_CPP_EXTENSION_DEBUG=1 to enable verbose logging. Each extension writes to its own file:

| Extension | Log file |
|---|---|
| Auto Model Discovery | /tmp/llama-cpp-auto.log |
| TPS Display | /tmp/llama-cpp-tps.log |

TPS Display also writes progress events to /tmp/llama-cpp-tps-progress.log.
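
The gating described above can be pictured with a small sketch. This is an assumption about the general pattern, not the extension's code: the helper name debugLogPath and the short keys "auto" and "tps" are hypothetical, while the environment variable name and file paths come from the table above.

```typescript
// Environment passed in explicitly so the helper stays easy to test.
type Env = { [key: string]: string | undefined };

// Log files per extension, as listed in the table above.
// The short keys "auto" and "tps" are illustrative names only.
const LOG_FILES: { [extension: string]: string } = {
  auto: "/tmp/llama-cpp-auto.log",
  tps: "/tmp/llama-cpp-tps.log",
};

// Return the log file for an extension, or null when debug logging is
// off or the extension is unknown.
function debugLogPath(extension: string, env: Env): string | null {
  if (env["LLAMA_CPP_EXTENSION_DEBUG"] !== "1") return null;
  const path = LOG_FILES[extension];
  return path === undefined ? null : path;
}
```

A caller would check the result before writing, so that nothing is logged unless LLAMA_CPP_EXTENSION_DEBUG=1 is set.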